Exocortical Concepts

Advancing Persistent AI Cognition Beyond LLM Architectural Limits

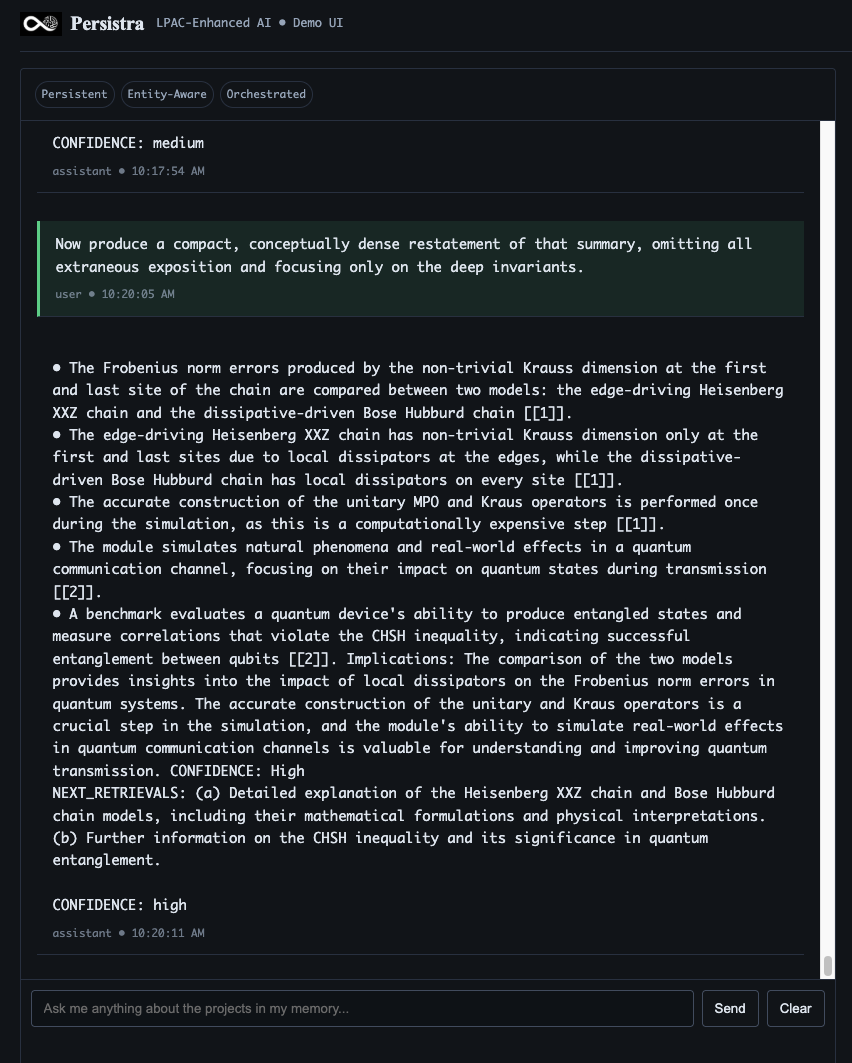

AI With Long-Term Project Memory

AI that builds expertise over time, learns your institution, and never resets.

We are developing Persistra, an exocortical layer that sits outside foundation models and gives AI systems:

- Long-term memory

- Stable identity

- Project-level reasoning over time

Persistra is not an LLM. It is model-agnostic and works with frontier and local LLMs.

LLMs answer questions

Persistra lets them build and retain expertise

THE CHALLENGE

Why today's AI can't truly learn, reason, or build expertise

Modern AI systems built on transformer architectures are fundamentally:

- Stateless - no durable memory across sessions

- Ephemeral - knowledge dissolves after each inference

- Non-learning post-deployment - cannot accumulate institutional expertise

- Identity-unstable - hallucination, role drift, changing personas

- Task-bound - no continuity across weeks or projects

- Context-limited - bounded by token windows

- Non-agentic - cannot maintain goals or plans over time

These are not "bugs"

They are architectural constraints

Meanwhile:

- Scaling laws are showing diminishing returns

- Larger context windows don't create cognition, just more buffering

- RAG is sophisticated retrieval, not memory, learning or planning

- Enterprises need systems that remember, adapt, and stabilize over months or years

The next breakthrough in AI may not be "a bigger model" but a new architecture.

If intelligence requires continuity, memory, reflection, and stable identity, no stateless system can get there alone.

OUR APPROACH

- Long-term project intelligence

- Adding the missing half of intelligence: the ability to carry knowledge forward across sessions, accumulate expertise and maintain project continuity.

Instead of forcing human-like thinking inside a next-token engine, we separate the functions.

- the "Persistra Exocortex"

- LLM handles language and pattern matching

- Persistra handles memory, identity, continuity, reasoning

- The model keeps doing exactly what it is good at, acting as a powerful pattern-matching and language-rendering cortex.

- Persistra supplies what's missing: a persistent project-aware substrate that survives time.
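The division of labor above can be illustrated with a minimal sketch. This is not Persistra's implementation: `PersistentMemory`, `ask`, and `echo_llm` are hypothetical names, the JSON file stands in for a much richer substrate, and the keyword-overlap recall is a deliberately naive placeholder. The sketch assumes only that the model is any callable taking a prompt string and returning text, which is what keeps the memory layer model-agnostic.

```python
import json
from pathlib import Path
from typing import Callable, List


class PersistentMemory:
    """Hypothetical durable store: notes live in a JSON file, so they outlast a session."""

    def __init__(self, path: Path) -> None:
        self.path = path
        # Reload whatever an earlier session wrote, if anything.
        self.notes: List[str] = json.loads(path.read_text()) if path.exists() else []

    def remember(self, note: str) -> None:
        self.notes.append(note)
        self.path.write_text(json.dumps(self.notes))

    def recall(self, query: str, limit: int = 3) -> List[str]:
        # Naive keyword overlap (an assumption for illustration only).
        words = set(query.lower().split())
        scored = [(len(words & set(n.lower().split())), n) for n in self.notes]
        ranked = sorted(scored, key=lambda pair: -pair[0])
        return [note for score, note in ranked if score > 0][:limit]


def ask(llm: Callable[[str], str], memory: PersistentMemory, question: str) -> str:
    """The memory layer supplies continuity; the model only renders language."""
    context = memory.recall(question)
    prompt = "\n".join(["Known project facts:", *context, "Question: " + question])
    answer = llm(prompt)
    memory.remember(f"Q: {question} A: {answer}")
    return answer


def echo_llm(prompt: str) -> str:
    # Stand-in for any frontier or local model.
    return f"saw {len(prompt.splitlines())} prompt lines"
```

Because `ask` accepts any callable, swapping the underlying model does not touch the stored notes, which is the property the separation is meant to preserve.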

LLM (Claude, Qwen, GPT)

- Pattern matching

- Language generation

- General world knowledge

- Fast adaptation per prompt

- No cross-session continuity

- Brilliant at tasks

Persistra Exocortex

- Thinking/Multi-Step Reasoning

- Project and goal tracking

- Cross-session continuity

- Reflection and self-correction

- Institution-specific and person-specific expertise

THE RESULTS:

Persistent, identity-stable, context-independent cognition.

Persistra provides:

- Project-level cognition rather than isolated tasks

- Long-term memory across sessions, days, months

- Stable agent identity grounded in semantic memory

- Continuous reasoning loops not limited by tokens

- Cross-session knowledge accumulation

- Integration of massive datasets beyond token limits

- A "world-model-like" substrate constructed outside the LLM

Long-term memory and project awareness that survives time.

What Persistra Enables (at a glance)

Persistra wraps around an LLM and provides a persistent project intelligence layer that:

- Stores, organizes, and retrieves long-term knowledge

- Tracks ongoing work across time

- Synthesizes new insights from accumulated information

- Ensures consistent reasoning across sessions

- Keeps your enterprise-specific data private and continuously available

No retraining. No huge context windows. No manual RAG pipelines.

Persistra enables capabilities that LLMs alone cannot provide, even with 1M+ token windows:

1. Cross-Session Continuity

Maintains understanding across thousands of interactions and evolving projects.

2. Accumulated Knowledge

Learns from past interactions and domain data without retraining the underlying model.

3. Stable Identity

Behaves consistently because identity is anchored in a persistent semantic graph, not prompt hacks.

4. Multi-Step Reasoning

Runs planning and reasoning loops independent of token windows and chat history limits.

5. Domain and Institutional Expertise

Builds organization-specific knowledge (underwriting policies, treaties, research programs, etc.) that compounds over time.

6. Cognitive Reflection

Can revisit prior reasoning, correct earlier conclusions, and update its own internal view of a problem.

LLMs become what they should have been from the start: syntactic front-ends to a real cognitive system.
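As a rough illustration of items 3 and 6, the sketch below anchors identity in stored facts rather than in prompt wording, and keeps every earlier conclusion so that a revision stays visible. It is a toy under stated assumptions: `SemanticGraph` and its methods are hypothetical names, and a dictionary of (subject, relation) pairs is far simpler than a production semantic graph.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Optional, Tuple


@dataclass
class SemanticGraph:
    """Hypothetical store mapping (subject, relation) to a history of values."""

    history: Dict[Tuple[str, str], List[str]] = field(default_factory=dict)

    def assert_fact(self, subject: str, relation: str, value: str) -> None:
        # Append rather than overwrite, so earlier conclusions stay inspectable.
        self.history.setdefault((subject, relation), []).append(value)

    def current(self, subject: str, relation: str) -> Optional[str]:
        values = self.history.get((subject, relation))
        return values[-1] if values else None

    def revisions(self, subject: str, relation: str) -> List[str]:
        return list(self.history.get((subject, relation), []))

    def identity_prompt(self, agent: str) -> str:
        # Identity is rebuilt from stored facts each session, in a fixed order.
        facts = sorted(
            (rel, vals[-1])
            for (subj, rel), vals in self.history.items()
            if subj == agent
        )
        return "; ".join(f"{rel}: {val}" for rel, val in facts)


graph = SemanticGraph()
graph.assert_fact("agent", "role", "underwriting analyst")
graph.assert_fact("agent", "tone", "formal")
graph.assert_fact("case-17", "risk", "low")
graph.assert_fact("case-17", "risk", "high")  # a later session corrects the earlier view
```

Here `graph.identity_prompt("agent")` yields the same description in every session, and `graph.revisions("case-17", "risk")` returns both the original and the corrected conclusion.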

Prototype Evidence

Persistra: prototype in progress, working toward:

- Weeks-long memory persistence

- Knowledge synthesis across documents + time

- Project continuity across thousands of tokens and multiple sessions

- Identity stability not achievable with prompts alone

- Large-scale semantic memory (tens of thousands of graph nodes and growing)

- Long reasoning chains without hallucination drift

- Exocortical cognition integrated with frontier LLMs - a work in progress, an extensible architecture.

Why Standard Benchmarks Fall Short

Standard benchmarks (MMLU, HumanEval, GSM8K, etc.) are a poor fit for Persistra because they test:

- Single-shot reasoning

- Knowledge retrieval

- Pattern matching

- Short-form task completion

They cannot measure:

- Cross-session learning

- Identity stability over time

- Project-level coherence

- Memory consolidation and knowledge accumulation

- Goal persistence and multi-week planning

- Cognitive reflection and correction of earlier answers

It's like testing a human's intelligence with a single exam, while ignoring how they develop a PhD thesis over five years.

Developing New Benchmarks

The Persistra Cognitive Persistence Benchmark Suite (PCBS)

We are designing longitudinal benchmarks that match what Persistra is built to do:

The Multi-Session Novel Writing Challenge

What it tests: Project-level coherence, character consistency, plot continuity across sessions

The Longitudinal Software Project Challenge

What it tests: Architectural vision persistence, code consistency, project understanding

The Research Synthesis Marathon

What it tests: Knowledge accumulation, learning, synthesis across time

The Identity Stability Test

What it tests: Persistent identity, role consistency, behavioral stability

The Reflective Learning Challenge

What it tests: Self-correction, learning from mistakes, metacognition

The Multi-Week Goal Pursuit Challenge

What it tests: Long-term planning, goal maintenance, strategic thinking over time
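To make the longitudinal idea concrete, here is one way a score for something like the Identity Stability Test might be computed. This is an assumption for illustration, not the PCBS scoring method: the function name `identity_stability`, the fixed probe questions, and the exact-match comparison are all hypothetical.

```python
from typing import Dict, List


def identity_stability(sessions: List[Dict[str, str]]) -> float:
    """Fraction of (later session, probe) pairs whose answer matches the first session.

    Each session maps a probe question to the agent's answer. Session 0 is the
    baseline. Comparison ignores case and surrounding whitespace.
    """
    if len(sessions) < 2:
        raise ValueError("need a baseline session and at least one later session")
    baseline = sessions[0]
    if not baseline:
        raise ValueError("baseline session has no probes")
    total = 0
    stable = 0
    for later in sessions[1:]:
        for probe, expected in baseline.items():
            total += 1
            if later.get(probe, "").strip().lower() == expected.strip().lower():
                stable += 1
    return stable / total


sessions = [
    {"role": "Analyst", "tone": "formal"},  # week 1 (baseline)
    {"role": "analyst", "tone": "formal"},  # week 2: unchanged
    {"role": "poet", "tone": "formal"},     # week 3: role drift
]
score = identity_stability(sessions)  # 3 of 4 comparisons match, so 0.75
```

A single-shot benchmark would only ever see one of these sessions, which is why drift in week 3 is invisible to it.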

We welcome collaboration with independent labs and institutions designing their own persistence-oriented evaluations.

Research Focus Areas

Local-First Intelligence

- Long-term agent identity independent of inference boundaries

- Memory Continuity Systems

- Semantic graphs enabling cross-session accumulated knowledge

- Exocortical planning loops

- Multi-step reasoning not limited by token windows

Persistent Identity Architecture

- Enterprise-safe cognition on local hardware without model retraining

- Meta-programming and autonomous improvement

The distinction involves architectural reconceptualization rather than incremental improvements. Traditional approaches treat memory as external retrieval mechanisms, while exocortical systems integrate memory persistence as foundational infrastructure enabling genuine cognitive evolution over extended temporal periods.

Research Development

- 19 patents pending

- 3+ years of research

- Multiple prototype systems

Our work focuses on:

- Local-first intelligence

- Long-term memory architectures

- Persistent agent identity

- Exocortical planning loops

- Safe self-modification and meta-programming under strict boundaries

For Researchers/Academics

We invite researchers in:

- Cognitive architectures

- Neurosymbolic systems

- Persistent agent identity

- Long-term memory architectures

- World-modeling

- Embedded AI safety

- Hybrid symbolic-neural planning

We are actively seeking partners exploring persistent cognition.

Persistra: a new category of AI system.

Whether you’re:

- an academic or research lab

- an enterprise partner

- an investor

- a podcaster

- an engineer

- or simply working on the same frontier

we would like to hear from you, to explore:

- Independent validation of exocortical cognition

- Joint publications

- Benchmark creation for persistent AI

- Integration with world-model researchers

- Enterprise pilot programs

For Enterprises

If your organization depends on cumulative expertise - underwriting, treaty analysis, research, intelligence, complex operations - Persistra can act as a persistent cognitive partner:

- Learns from your historical decisions and data

- Remembers every case, every edge condition, every exception

- Maintains continuity as teams, tools and models change

- Deployable on-prem or in controlled environments for regulatory and data-sovereignty needs

We are currently exploring pilot programs with a small number of partners.

For Investors

Persistra is not another SaaS wrapper on top of frontier models; it is infrastructure for the post-LLM era:

- Model-agnostic substrate

- Strong IP moat (exocortical architecture + persistence)

- Directly aligned with emerging world-model and agentic system research

- Built to complement, not compete with, foundation model providers

We are interested in partners who see persistent cognition as the next platform layer.

Contact: inquiries@exocorticalconcepts.com

© 2025 Exocortical Concepts, Inc. All rights reserved

Aspects Patent Pending - This website contains forward-looking statements and proprietary information.

© 2025 NVIDIA. NVIDIA and the NVIDIA logo are trademarks and/or registered trademarks of NVIDIA Corporation in the U.S. and other countries.
